Fast, cost-optimized LLM endpoints

Quickly evaluate and scale the latest models through OctoAI's single API. Our deep expertise in model compilation, model curation, and ML systems means you get low-latency, affordable endpoints that can handle any production workload.

Read about our customers

Capitol AI increases speeds by 4x and reduces costs by 75% on OctoAI

Run your choice of models and fine-tunes

Build on your choice of Qwen 1.5, Mixtral 8x22B, Nous Hermes 2 Pro Mistral, Mixtral 8x7B, Mistral, Llama 2, or Code Llama models on our blazing-fast API endpoints. Scale seamlessly and reliably without sacrificing performance.

Migrate with ease

OpenAI SDK users move to OctoAI's compatible API with minimal effort. See how.
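For an OpenAI-compatible API, migration usually comes down to changing the base URL and the API key while leaving the request body alone. Here is a minimal sketch of that idea using only the Python standard library, so the request shape is easy to inspect. The base URL and the `OCTOAI_TOKEN` environment variable name are assumptions for illustration, and `build_chat_request` is a hypothetical helper; confirm the real values in the OctoAI documentation.

```python
import json
import os
import urllib.request

# Assumed values for illustration only; check the OctoAI docs for the real ones.
OCTOAI_BASE_URL = "https://text.octoai.run/v1"
TOKEN_ENV_VAR = "OCTOAI_TOKEN"


def build_chat_request(messages, model="mixtral-8x7b-instruct", max_tokens=512):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": messages, "max_tokens": max_tokens}
    return urllib.request.Request(
        url=f"{OCTOAI_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Falls back to an empty token so the sketch runs without credentials.
            "Authorization": f"Bearer {os.environ.get(TOKEN_ENV_VAR, '')}",
        },
        method="POST",
    )


request = build_chat_request([{"role": "user", "content": "Hello world"}])
print(request.get_method(), request.full_url)
```

If you already use the OpenAI Python SDK, the equivalent change would be passing `base_url` and `api_key` when constructing the client and keeping the rest of your code as it is.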

Adaptive scalability

Growth-ready for your app

Robust reliability

Serving millions of customer inferences daily

Low cost with high performance

Keeping customers and finance departments happy

from octoai.client import Client

client = Client()

# Request a chat completion from the Mixtral 8x7B instruct model.
completion = client.chat.completions.create(
    messages=[
        {
            "role": "system",
            "content": "You are a helpful assistant."
        },
        {
            "role": "user",
            "content": "Hello world"
        }
    ],
    model="mixtral-8x7b-instruct",
    max_tokens=512,
    presence_penalty=0,
    temperature=0.1,
    top_p=0.9,
)

Trusted by GenAI Innovators

“Working with the OctoAI team, we were able to quickly evaluate the new model, validate its performance through our proof of concept phase, and move the model to production. Mixtral on OctoAI serves a majority of the inferences and end player experiences on AI Dungeon today.”

Nick Walton

CEO & Co-Founder, Latitude

“The LLM landscape is changing almost every day, and we need the flexibility to quickly select and test the latest options. OctoAI made it easy for us to evaluate a number of fine tuned model variants for our needs, identify the best one, and move it to production for our application.”

Matt Shumer

CEO & Co-Founder, Otherside AI

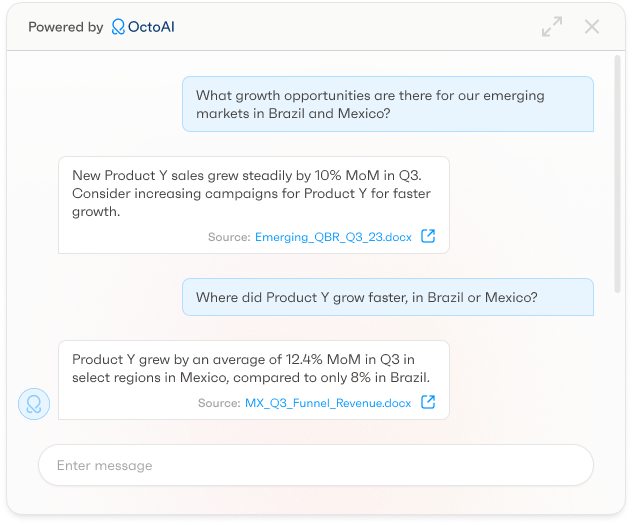

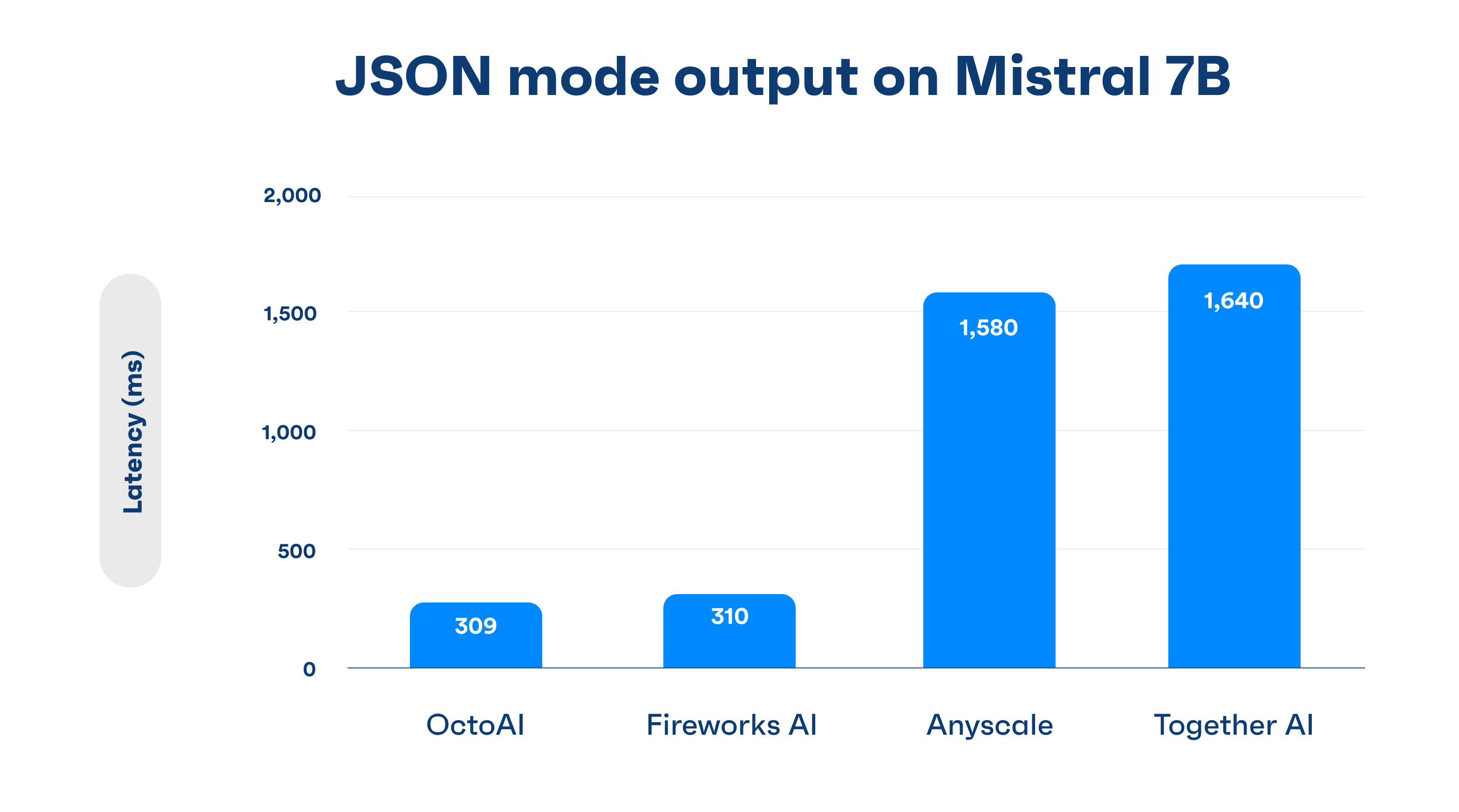

JSON mode for all LLMs on OctoAI

JSON mode is built into the OctoAI Systems Stack, so it works with all LLMs on the platform without disruptions or quality issues. OctoAI has also optimized JSON mode for industry-leading latency.
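As a rough illustration of how JSON mode is typically used, the sketch below builds a request payload that asks for structured output and then checks a reply against the required keys. The `response_format` shape (a `json_object` type plus a `schema` field), the `CAR_SCHEMA` example, and both helper functions are assumptions for illustration, not a confirmed description of OctoAI's API. The sample reply is a stand-in string, not real model output.

```python
import json

# Hypothetical schema describing the structure we want back.
CAR_SCHEMA = {
    "type": "object",
    "properties": {"make": {"type": "string"}, "year": {"type": "integer"}},
    "required": ["make", "year"],
}


def build_json_mode_payload(prompt, schema, model="mixtral-8x7b-instruct"):
    """Build a chat payload that requests JSON output."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # Assumption: the endpoint accepts a JSON schema alongside the type.
        "response_format": {"type": "json_object", "schema": schema},
    }


def parse_json_reply(content, schema):
    """Parse the reply text and verify that every required key is present."""
    data = json.loads(content)
    missing = [key for key in schema.get("required", []) if key not in data]
    if missing:
        raise ValueError(f"reply is missing required keys: {missing}")
    return data


payload = build_json_mode_payload("Describe a 2015 Toyota as JSON.", CAR_SCHEMA)
sample_reply = '{"make": "Toyota", "year": 2015}'  # stand-in, not real model output
car = parse_json_reply(sample_reply, CAR_SCHEMA)
print(car["make"], car["year"])
```

Checking required keys on the client side is a cheap safeguard even when the server enforces the schema, since it keeps downstream code from failing on an unexpected reply.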

Text embedding for RAG

Use the GTE Large embedding endpoint to power retrieval-augmented generation (RAG) or semantic search in your apps. GTE Large scores 63.13% on the MTEB leaderboard, and because the API is OpenAI-compatible, migrating from OpenAI requires minimal code updates. Learn how.
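To make the retrieval step of RAG concrete, here is a small sketch of ranking documents by cosine similarity between embedding vectors. The three-dimensional vectors are toy values invented for illustration; real embeddings from an endpoint such as GTE Large would be much longer and would come from an API call that this sketch leaves out. The function names and document IDs are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, doc_vecs, k=2):
    """Return the IDs of the k documents most similar to the query."""
    scored = [
        (cosine_similarity(query_vec, vec), doc_id)
        for doc_id, vec in doc_vecs.items()
    ]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Toy vectors standing in for real embeddings.
doc_vectors = {
    "pricing": [0.9, 0.1, 0.0],
    "scaling": [0.1, 0.9, 0.1],
    "json-mode": [0.0, 0.2, 0.9],
}
query_vector = [0.8, 0.2, 0.1]

results = top_k(query_vector, doc_vectors, k=2)
print(results)
```

In a full RAG flow, the text of the top-ranked documents would then be added to the prompt sent to the LLM.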

Build using our high-quality and cost-effective Mixtral 8x7B models

Our accelerated Mixtral delivers quality competitive with GPT-3.5, with the flexibility of open source. Our price per token is 4x lower than GPT-3.5's. Migration is easy with one unified, OpenAI-compatible API. We support fine-tunes from the community, including the latest from Nous Research.

Build using multiple models for your use case

With OctoAI, you can link several generative models together to create a highly performant pipeline. You can build new experiences for your industry's needs using language, image, audio, or your own custom models. Learn how our customer Capitol AI worked with us to achieve cost savings across their multiple models in production.
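One simple way to picture a multi-model pipeline is as a list of stages that each read and extend a shared state. The sketch below uses placeholder functions in place of real model calls, so every stage name and output here is hypothetical; in practice each stage would call the relevant model endpoint.

```python
from typing import Callable, Dict, List

Stage = Callable[[Dict], Dict]


def run_pipeline(stages: List[Stage], state: Dict) -> Dict:
    """Pass the state through each stage in order and return the result."""
    for stage in stages:
        state = stage(state)
    return state


def draft_text(state: Dict) -> Dict:
    # Placeholder for a language model call.
    return {**state, "text": f"Report on {state['topic']}"}


def illustrate(state: Dict) -> Dict:
    # Placeholder for an image model call that uses the drafted text.
    return {**state, "image_prompt": f"Cover illustration for: {state['text']}"}


result = run_pipeline([draft_text, illustrate], {"topic": "solar energy"})
print(result["image_prompt"])
```

Keeping each stage as a plain function makes it easy to swap one model for another without touching the rest of the pipeline.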