We are very excited to announce that, six months after our seed funding, we have closed a $15 million Series A funding round. This is a hard time to be positive about the future, but we see very good things ahead. Machine learning and artificial intelligence will be key components of nearly every software application, and they need to be easy, efficient and portable. That is what we are making possible with OctoML.

We started the Apache TVM open source project about four years ago to bridge the gap between the Cambrian explosion of ML models and (another!) Cambrian explosion of HW targets like CPUs, GPUs, emerging AI chips, FPGAs, etc. Fast forwarding four years, Apache TVM supports all major HW targets and high-level ML frameworks and offers state-of-the-art performance in most scenarios, in some cases with 10x+ performance gains. The TVM community is thriving, with well over 300 contributors worldwide and buy-in from most major HW vendors, and TVM is well on its way to becoming a de facto industry standard for ML model optimization and deployment. In fact, chances are you already use products with models optimized by Apache TVM, as Amazon (the “Alexa” wake-up on smart speakers uses a model optimized with TVM), Microsoft, Facebook, Qualcomm (“TVM is key to ML Access on Hexagon”), ARM, etc. are all users and contributors. Check out the videos and slides from the last TVM conference.

We believe that any data scientist or ML engineer should be able to easily deploy models without worrying about performance (or massive cloud bills!), model operator coverage or hardware lock-in. As machine learning models become an integral part of modern applications, pain points in optimizing, deploying and monitoring models are quickly becoming major limitations. Creating an ML model is just the first step, and often not the hardest one. The hardest is usually getting the model deployed efficiently onto the right hardware platform(s). The engineering effort involved in ML model deployment is high because of vendor-specific software stacks and gaps in operator coverage caused by fast model evolution. ML is also very resource-hungry, leading to edge deployment limitations and ballooning cloud costs.

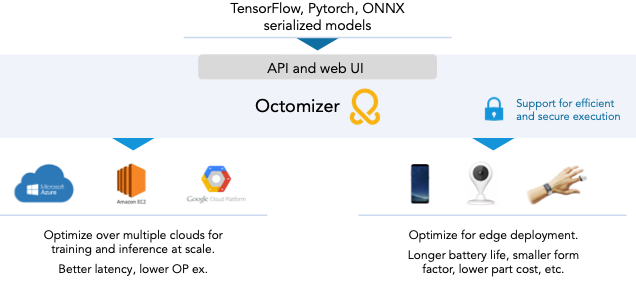

OctoML builds on Apache TVM to offer automated ML operations with a unified software foundation, for any model on any hardware. The “secret sauce” in our technology is using ML to optimize ML, which reduces optimization and tuning time by orders of magnitude. Our core offering is the OctoML Platform, a SaaS platform that enables anyone to turn their ML models into highly optimized packages for deployment at the edge and in the cloud. The Octomizer increases portability, reduces the time taken to put models into actual use, lowers edge resource and HW costs as well as cloud bills, and enables new applications that weren’t possible before because of resource constraints like compute power, battery life, and “human patience” when models are too slow.

Octonauts!

In the past six months we have grown our team from six to twenty. We have brought on board some of the brightest minds in ML and systems, as well as experts in building massively successful platforms such as the Unity engine, Box, etc. The opportunity is clear, and our Series A funding will enable us to continue to grow our already world-class team significantly, further strengthen TVM and the Octomizer platform, and work closely with our early customers. We are thrilled to work with Amplify Partners and Madrona Venture Group, both of which bring significant experience and a track record in the ML systems space. Plus, they are all-around terrific people!

Mike Dauber, General Partner at Amplify Partners

Matt McIlwain, Managing Director at Madrona Venture Group

Reach out to us to sign up or to become an Octonaut!
