OctoML expands deployment choice with new multi-cloud and edge targets

A major challenge we hear from our enterprise machine learning customers is managing the ever-growing number of hardware inference targets that modern use cases demand. Although model training is still generally run on server-class GPUs, inference requires running the model efficiently on a variety of smartphones, server instances across multiple clouds, or new IoT and edge devices. This hardware explosion is often driven by the diverse models (vision, language, prediction) our customers need to support; some models have a better cost/performance ratio on specific hardware, but identifying the correct hardware is cumbersome and time-consuming. As more organizations embrace a multi-cloud approach, hardware identification and benchmarking become even more difficult.

What these machine learning engineers need is the ability to easily target a wide variety of hardware, on the cloud and at the edge, across many model types, with the expectation of high performance across all of them.

We’re excited to announce we’ve taken a significant step forward in helping them do just that.

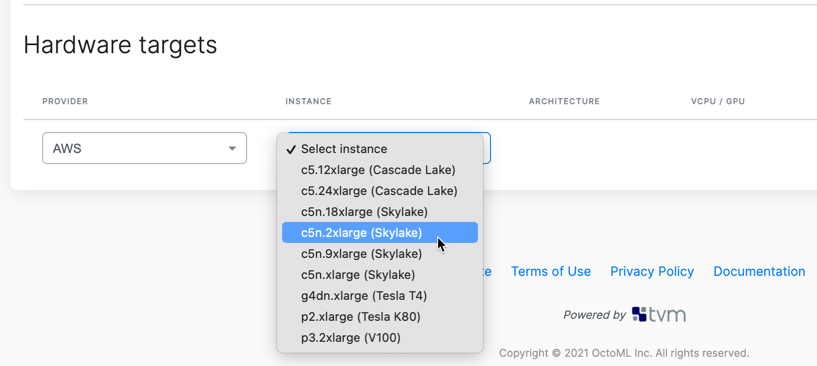

The OctoML Machine Learning Deployment Platform now supports inference targets from the cloud to the edge, including hardware from leading industry vendors like AWS, GCP, NVIDIA, AMD, Arm, and Intel. This significant expansion gives our customers a single platform that covers their diverse model needs with the breadth of hardware choice they are seeking.

The OctoML platform is the only solution that offers customers a unified ML deployment lifecycle that automates model performance acceleration, enables comparative benchmarking across cloud and edge stacks, and provides ready-for-production packaging of accelerated models. All of this empowers machine learning engineers to dramatically reduce both deployment costs and time to production.

How our customers benefit from more choice

In sharing this product update, I also want to highlight use cases from some of our early access customers who have benefited from having more hardware target choice.

The first class of customer use cases comes from ML teams early in model development, who are looking to identify the best hardware targets for their specific models. Given the difficulty of hardware benchmarking and the time-to-market constraints of their projects, many ML engineers end up relying on targets they know will work, even if they are expensive. Manual web research and limited evaluation of independent or vendor-provided data is the standard experience, yet it is costly in both time and money. Even that level of effort often doesn’t tell a customer what they actually need to know, which is how their specific model is going to perform in the real world on production hardware.

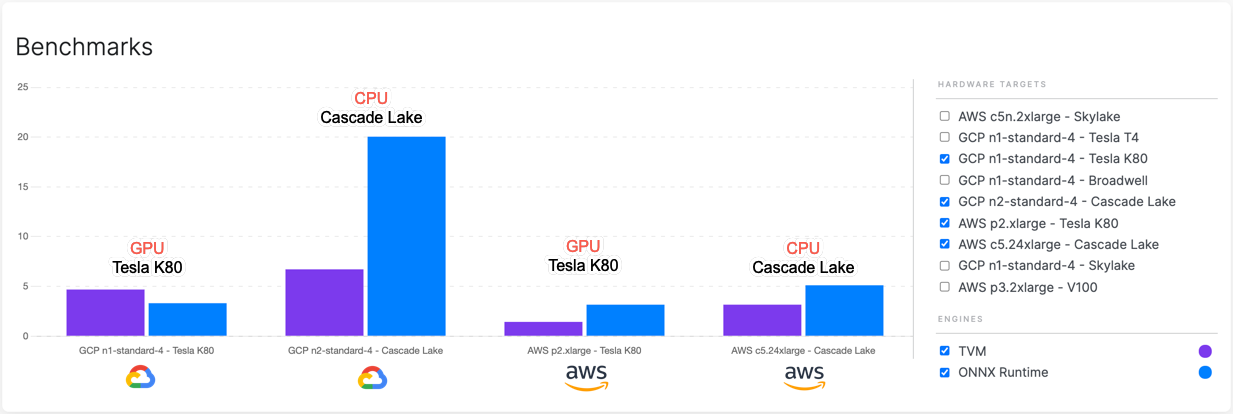

Benchmarking report across 2 GCP instances and 2 AWS instances: TVM (purple bar) acceleration over ONNX Runtime (blue bar) for an SSD-MobileNet-v1 computer vision model (lower bars mean lower inference time, that is, faster inference)

As you can see from this screenshot of our Benchmarks UI, we are providing customers the key performance metrics they need to make an informed decision on the right hardware solution. Now our customers can have data-driven conversations with company leaders and product stakeholders and make the right business decisions based upon the user experience (tied to the performance they want to provide), the profitability targets of their product/service offering, and other critical dimensions.
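To make that kind of decision concrete, here is a minimal sketch of how benchmark numbers could be turned into a cost comparison. It is not part of the OctoML platform or its API: the `BenchmarkResult` type, the function names, and the idea of pairing each measured latency with an hourly instance price are all assumptions made for illustration, and the caller supplies the real latencies and prices.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class BenchmarkResult:
    """One benchmarked hardware target (hypothetical structure)."""
    target: str             # any label for the target, e.g. an instance name
    latency_ms: float       # mean time per inference, in milliseconds
    hourly_cost_usd: float  # price of the instance per hour, in US dollars


def cost_per_million(result: BenchmarkResult) -> float:
    """USD cost of one million back-to-back inferences on a single instance."""
    inferences_per_hour = 3_600_000.0 / result.latency_ms
    return result.hourly_cost_usd / inferences_per_hour * 1_000_000


def cheapest_within_budget(
    results: Iterable[BenchmarkResult], latency_budget_ms: float
) -> Optional[BenchmarkResult]:
    """Return the lowest-cost target whose latency meets the budget, if any."""
    eligible = [r for r in results if r.latency_ms <= latency_budget_ms]
    return min(eligible, key=cost_per_million, default=None)
```

Calling `cheapest_within_budget(results, latency_budget_ms=25.0)` on a list of measured results returns the cheapest target that still meets a 25 ms latency requirement, or `None` if no target qualifies. The sketch assumes one inference at a time per instance; batching or concurrent requests would change the throughput calculation.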

The second customer pain point we’ve heard comes from teams already deploying dozens of models into production across a diverse portfolio of hardware targets: from cloud to edge, from GPU to CPU. Before leaning on OctoML to address their deployment challenges, these customers struggled to control ML operational costs in two ways:

Relying on different hardware vendors and/or cloud providers meant cobbling together redundant pipelines with different workflows. Each pipeline included its own unique set of supporting software libraries and complex manual processes, which created a very significant burden on machine learning engineers’ time.

With pressing product timelines and a rapidly expanding universe of available hardware targets, engineers did not have the spare cycles to research and identify the best targets for a given application of the model they’ve created. For example, prior to working with our platform, one customer wasn’t able to explore the significant cost savings of moving offline inference from a GPU to a CPU instance.

Using OctoML’s automated performance acceleration for their proprietary models across a choice of hardware, with automated benchmarks, is exactly what they need.

If you are grappling with the issues described here, sign up for an early access trial of OctoML. AWS and GCP instances are currently supported, with Azure support coming soon. We are also continually adding new targets, so if you are interested in evaluating a specific hardware target, please let us know when you sign up.
